By: Ralf Ellspermann

25-Year, Multi-Awarded BPO Veteran

Published: 20 March 2026

Updated: March 16, 2026

TL;DR: The Key Takeaway

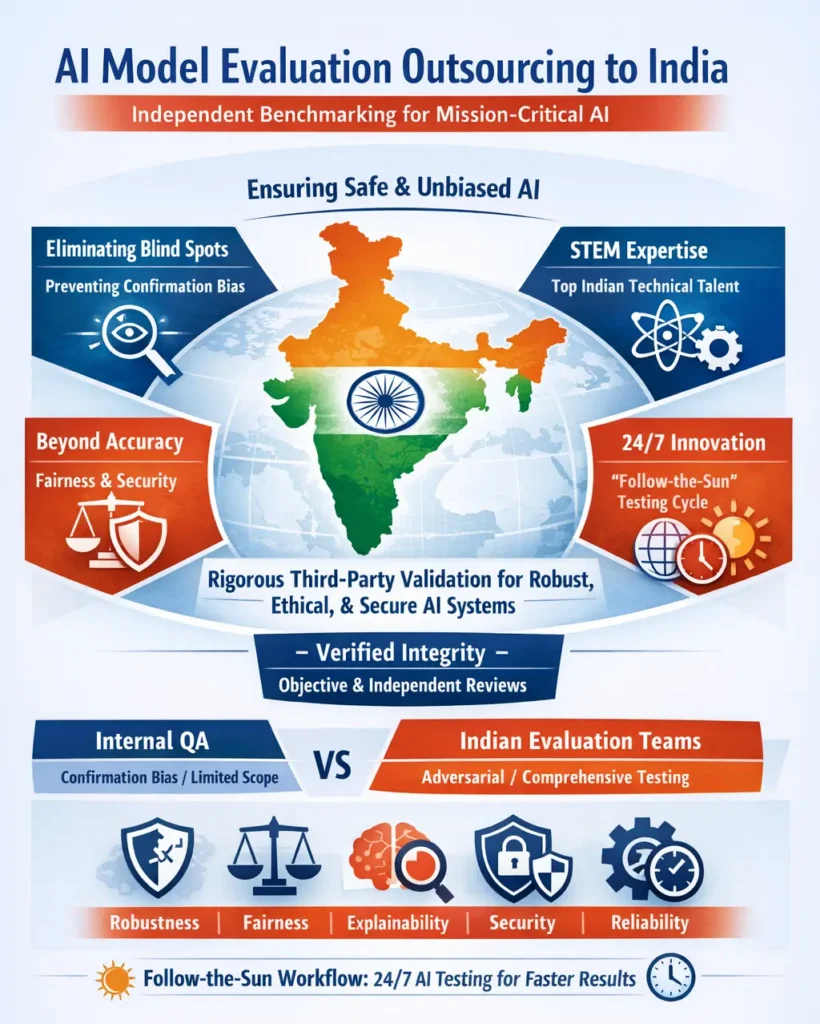

AI model evaluation outsourcing to India provides a critical, independent layer of validation, ensuring that complex AI systems are not just algorithmically sound but also safe, unbiased, and aligned with real-world performance benchmarks. The nation’s deep expertise transforms evaluation from a final-step quality check into a continuous, strategic discipline for achieving model integrity.

As artificial intelligence shifts from experimental tool to the backbone of global enterprise, the need for impartial, third-party validation has become urgent. Relying on internal testing alone is no longer a viable strategy for high-stakes deployments. Today, many technology leaders are drawing on India’s STEM talent for rigorous, adversarial benchmarking, so that their models are not only accurate but also ethically sound and secure.

- Eliminating Blind Spots: Independent verification prevents the inherent confirmation bias that often plagues internal development teams.

- Specialized Expertise: India’s premier technical institutes provide the mathematical depth required to stress-test complex neural networks.

- Beyond Accuracy: Evaluation now encompasses a 360-degree view of robustness, explainability, fairness, and security.

- 24/7 Innovation: Significant time-zone advantages allow for a “follow-the-sun” testing model that can substantially shorten development cycles.

- Verified Integrity: Cynergy BPO streamlines the process of securing top-tier Indian specialists who provide the objective data needed for safe AI scaling.

The Critical Need for Independent Verification

There is a long-standing rule in high-stakes engineering: never grade your own work. In the realm of artificial intelligence, this has become a non-negotiable directive. When the same team that builds a model is also tasked with validating it, a dangerous “echo chamber” effect can occur. Developers, intimately familiar with their training data, may inadvertently design tests that avoid the model’s known weaknesses, leading to a false sense of security.

Deploying an unvetted model into the wild—especially in sensitive sectors like autonomous transit, medical diagnostics, or high-frequency trading—carries immense risk. A model that performs flawlessly in a lab might crumble when faced with “noisy” or unexpected real-world data. Engaging an external evaluation partner in India introduces a necessary layer of objectivity. These specialists approach the algorithm with an adversarial mindset; their goal isn’t to prove it works, but to discover exactly where it breaks.
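The gap between lab performance and real-world performance can be made concrete with a simple noise-injection check. The following is a minimal sketch, not any provider's actual test harness: the threshold classifier `toy_model`, the synthetic data, and the noise levels are all assumptions chosen purely for illustration.

```python
import random

def toy_model(x):
    """Hypothetical stand-in for a trained model: predicts 1 when x > 0.5."""
    return 1 if x > 0.5 else 0

def accuracy(model, inputs, labels):
    """Fraction of inputs for which the model's prediction matches the label."""
    correct = sum(1 for x, y in zip(inputs, labels) if model(x) == y)
    return correct / len(labels)

def accuracy_under_noise(model, inputs, labels, sigma, rng):
    """Add Gaussian noise (standard deviation sigma) to every input, then score."""
    noisy = [x + rng.gauss(0.0, sigma) for x in inputs]
    return accuracy(model, noisy, labels)

# Synthetic "lab" data (an assumption): clean inputs the toy model handles perfectly.
rng = random.Random(0)
inputs = [rng.random() for _ in range(2000)]
labels = [1 if x > 0.5 else 0 for x in inputs]

clean_acc = accuracy(toy_model, inputs, labels)

# Accuracy at increasing noise levels; the levels themselves are illustrative.
noise_report = {
    sigma: accuracy_under_noise(toy_model, inputs, labels, sigma, rng)
    for sigma in (0.05, 0.2, 0.5)
}

print("clean accuracy:", clean_acc)
for sigma, acc in noise_report.items():
    print(f"sigma={sigma}: accuracy={acc:.3f}")
```

A model that scores perfectly on clean inputs loses accuracy as the noise grows, which is the kind of degradation curve an external evaluator would typically chart for a real model.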

India’s Infrastructure for Verifiable AI Trust

India’s dominance in the AI evaluation space is the result of a massive, long-term investment in technical education and process-driven excellence. The country’s premier institutions, such as the IITs and IISc, produce a steady stream of data scientists who possess the advanced statistical skills needed for forensic model auditing.

This human capital is bolstered by a world-class IT infrastructure that has spent decades managing mission-critical operations for the world’s largest corporations. This isn’t just about running scripts; it’s about designing custom benchmarking environments and sophisticated validation strategies. With strong English proficiency and close familiarity with Western markets, Indian evaluation teams can act as a highly skilled extension of a US company’s domestic office.

“Our partners are moving beyond simple quality checks; they are demanding a foundation of verifiable trust. They need to prove to boards and regulators that their AI is robust, fair, and resilient. Outsourcing these evaluations to India provides a level of analytical depth that is simply unavailable elsewhere, transforming validation from a hurdle into a massive competitive advantage.” — John Maczynski, CEO, Cynergy BPO

Internal QA vs. Independent Indian Evaluation

| Evaluation Dimension | Internal Development Team | Independent Indian Partner |
| --- | --- | --- |
| Primary Mindset | Confirmatory (Prove it works) | Adversarial (Find the failure) |
| Testing Scope | Familiar data & known use cases | Edge cases & “out-of-distribution” data |
| Bias Risk | Vulnerable to developer blind spots | Objective and independent perspective |
| Talent Level | Generalist QA staff | Specialized STEM/AI researchers |
| Business Value | Basic functionality check | Risk mitigation & regulatory assurance |

Evolving the Scorecard: A Holistic Framework of Trust

The industry has moved past the era where “accuracy” was the only metric that mattered. A model can be 99% accurate but still be fundamentally dangerous if it is biased or easily manipulated. Today’s sophisticated evaluation frameworks—perfected within the Indian IT-BPM sector—analyze a model across four critical pillars:

- Robustness: How does the model handle corrupted, noisy, or unexpected data?

- Fairness: Does the model produce discriminatory outcomes for specific demographics?

- Explainability: Can a human expert actually understand the “why” behind a model’s decision?

- Security: Is the system resilient against adversarial attacks designed to trick the AI?
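To make the security pillar concrete, here is a minimal sketch of the Fast Gradient Sign Method (FGSM), one of the attacks named in the scorecard. It targets a tiny logistic-regression classifier whose weights, bias, input, and perturbation budget `epsilon` are all hypothetical values picked for illustration; real evaluations would run against the actual model, usually through a deep-learning framework.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def predict_proba(weights, bias, x):
    """Probability of class 1 under a logistic-regression model."""
    return sigmoid(sum(w * xi for w, xi in zip(weights, x)) + bias)

def sign(v):
    """Return -1, 0, or 1 according to the sign of v."""
    return (v > 0) - (v < 0)

def fgsm(weights, bias, x, y, epsilon):
    """One FGSM step: x_adv = x + epsilon * sign(dLoss/dx).

    For logistic regression with cross-entropy loss, dLoss/dx = (p - y) * w.
    """
    p = predict_proba(weights, bias, x)
    grad = [(p - y) * w for w in weights]
    return [xi + epsilon * sign(g) for xi, g in zip(x, grad)]

# Hypothetical model parameters and input (assumptions, not from any real system).
weights = [2.0, -1.0, 0.5]
bias = -0.2
x = [0.6, 0.3, 0.4]
y = 1  # true label

p_clean = predict_proba(weights, bias, x)
x_adv = fgsm(weights, bias, x, y, epsilon=0.3)
p_adv = predict_proba(weights, bias, x_adv)

print(f"clean probability of class 1:       {p_clean:.3f}")
print(f"adversarial probability of class 1: {p_adv:.3f}")
```

In this toy setup, a perturbation of at most 0.3 per feature is enough to push the predicted probability below 0.5 and flip the predicted class, which is the kind of failure a security evaluation is designed to surface.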

The 2026 AI Evaluation Scorecard

| Pillar | Techniques Applied | Business Significance |
| --- | --- | --- |
| Performance | Precision, Recall, F1-Score | Efficiency and raw predictive power. |
| Reliability | Stress-testing, noise injection | Performance under real-world pressure. |
| Equity | Disparate impact analysis | Protection against legal and ethical fallout. |
| Interpretability | SHAP and LIME methodologies | Regulatory compliance and user trust. |
| Resilience | FGSM and PGD adversarial attacks | Defense against malicious manipulation. |
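Two of the scorecard rows reduce to short formulas. The sketch below computes precision, recall, and F1 for the Performance row and a disparate impact ratio for the Equity row. The labels, predictions, and group assignments are invented for illustration, and the helper names are hypothetical rather than taken from any particular library.

```python
def precision_recall_f1(y_true, y_pred):
    """Precision, recall, and F1 for binary labels (1 = positive class)."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = (2 * precision * recall / (precision + recall)
          if precision + recall else 0.0)
    return precision, recall, f1

def disparate_impact(y_pred, groups, protected, reference):
    """Favourable-outcome rate of the protected group divided by the reference group's."""
    def favourable_rate(group):
        preds = [p for p, g in zip(y_pred, groups) if g == group]
        return sum(preds) / len(preds)
    return favourable_rate(protected) / favourable_rate(reference)

# Invented example data: 10 predictions split across two groups, "a" and "b".
y_true = [1, 1, 1, 0, 0, 1, 0, 0, 1, 0]
y_pred = [1, 1, 0, 0, 1, 1, 0, 0, 0, 0]
groups = ["a", "a", "a", "a", "a", "b", "b", "b", "b", "b"]

precision, recall, f1 = precision_recall_f1(y_true, y_pred)
di = disparate_impact(y_pred, groups, protected="b", reference="a")

print(f"precision={precision:.2f} recall={recall:.2f} f1={f1:.2f}")
print(f"disparate impact (b vs a)={di:.2f}")
```

On this data, group "b" receives the favourable outcome at one third the rate of group "a". A ratio below 0.8 is a commonly cited warning threshold (the "four-fifths rule"), though the appropriate cut-off depends on the jurisdiction and use case.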

The Strategic Advantage of “Follow-the-Sun” Testing

For North American AI firms, the time-zone difference with India isn’t a hurdle; it’s an advantage. By establishing a “follow-the-sun” validation cycle, companies can keep development and evaluation moving nearly around the clock.

When a US-based team pushes a new model version at 6:00 PM, the Indian evaluation team is starting, or about to start, its workday. They spend the next eight hours subjecting the model to rigorous stress tests. By the time the US developers return to their desks the following morning, a comprehensive report detailing the failure modes found and the key performance metrics is already waiting in their inbox. This hand-off reduces idle time between iterations and supports an aggressive iteration pace.
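The hand-off arithmetic is easy to check. The sketch below assumes a team on US Pacific time in winter (UTC-8) and uses a hypothetical push date and a 9:00 AM return to work; India Standard Time is UTC+5:30 year-round. Fixed offsets are used for simplicity, so daylight saving time is ignored.

```python
from datetime import datetime, timedelta, timezone

# Fixed UTC offsets (assumptions for illustration; DST is not modelled).
PST = timezone(timedelta(hours=-8), "PST")
IST = timezone(timedelta(hours=5, minutes=30), "IST")

# Hypothetical hand-off: model pushed at 6:00 PM Pacific on 12 January 2026.
push_time = datetime(2026, 1, 12, 18, 0, tzinfo=PST)
push_in_india = push_time.astimezone(IST)

# US team back at their desks at 9:00 AM Pacific the next morning.
report_due = datetime(2026, 1, 13, 9, 0, tzinfo=PST)
evaluation_window = report_due - push_time

print("Push arrives in India at:", push_in_india.strftime("%Y-%m-%d %H:%M %Z"))
print("Time available before the US team returns:", evaluation_window)
```

Under these assumptions the push lands at 7:30 AM in India, leaving a 15-hour window before the US team returns. A push from the US East Coast at the same local time would arrive three hours earlier in India.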

Expert FAQs

Why is AI validation harder than regular software testing?

Traditional software follows explicit “if-then” logic, so its behavior is predictable. AI models are probabilistic: they learn patterns from data rather than following hand-written rules. A model might look perfect during training but exhibit bizarre behavior when it sees something new. Independent evaluation is one of the most reliable ways to catch these unpredictable failure modes before deployment.

What makes Indian engineers specifically suited for this?

The Indian education system places an extraordinary emphasis on pure mathematics and statistics. This creates a workforce that doesn’t just “use” AI tools but understands the underlying calculus of neural networks. This depth is essential for the “adversarial” thinking required to break a model.

How is my IP and proprietary data protected?

Leading Indian providers operate in air-gapped, highly secure environments that are certified to ISO 27001 and audited against SOC 2. They comply with major data-protection regulations, including GDPR and CCPA, so that your models and datasets are protected with a level of security comparable to that of top-tier global banks.

Can these teams handle massive Large Language Models (LLMs)?

India’s IT sector is built for massive scale. Whether it’s Reinforcement Learning from Human Feedback (RLHF) or red-teaming a trillion-parameter model, Indian firms can rapidly deploy hundreds of specialized researchers to audit LLMs for hallucinations and safety at a scale that is cost-prohibitive in the US.


Ralf Ellspermann is the Chief Strategy Officer (CSO) of Cynergy BPO and a globally recognized authority in business process and contact center outsourcing. With more than 25 years of experience advising enterprises and SMEs, he provides strategic guidance on vendor selection, CX optimization, and scalable outsourcing strategies across global markets. His expertise spans fintech, ecommerce and retail, healthcare, insurance, travel and hospitality, and technology (AI & SaaS) outsourcing.

A frequent speaker at leading industry conferences, Ralf is also a published contributor to The Times of India and CustomerThink, where he shares insights on outsourcing strategy, customer experience, and digital transformation.