By: Ralf Ellspermann

25-Year, Multi-Award-Winning BPO Veteran

Published: 1 April 2026

Updated: March 25, 2026

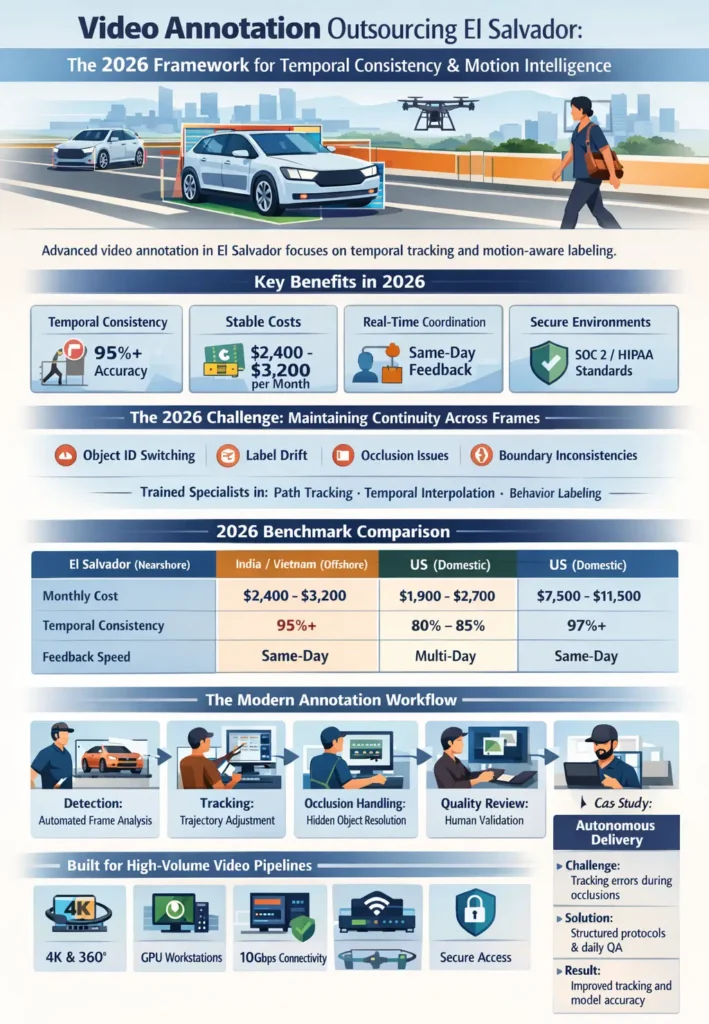

Video annotation outsourcing in El Salvador has advanced into a specialized discipline focused on motion accuracy, continuity, and frame-level consistency.

In 2026, training video-based AI is no longer about labeling isolated frames; it is about preserving object identity, trajectory, and behavior across time. This requires technical discipline, structured workflows, and close coordination with model teams.

El Salvador has emerged as a nearshore environment well-suited for this work, offering real-time collaboration, high-throughput infrastructure, and consistent annotation quality for complex video datasets.

30-Second Executive Briefing

- Temporal Consistency: Teams specialize in multi-frame tracking, interpolation, and identity persistence, reducing flicker and label instability.

- Stable Cost Structure: Fully loaded monthly costs typically range from $2,400 to $3,200 per annotator, supporting predictable project planning.

- Real-Time Coordination: CST alignment allows for same-day feedback, guideline updates, and quality calibration.

- High-Throughput Infrastructure: Facilities support 4K, 360°, and multi-sensor video pipelines without bottlenecks.

- Secure Environments: Controlled-access delivery models ensure sensitive video data remains protected.

The 2026 Challenge: Maintaining Continuity Across Frames

The primary difficulty in video annotation is no longer detection but consistency over time.

Common failure points include:

- Object ID switching during occlusion

- Label drift across frames

- Inconsistent bounding or segmentation boundaries

- Misinterpretation of motion or intent

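These issues can significantly degrade model performance. A single ID switch, for example, teaches a tracker that one pedestrian turned into another partway through a sequence.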

Annotation teams in El Salvador address these issues by training specialists in:

- Path-based annotation

- Temporal interpolation

- Behavior-aware labeling

This shifts annotation from static tagging to motion-aware data structuring.
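Temporal interpolation typically means an annotator places boxes on a few keyframes and the tool fills in the frames between them, which the annotator then reviews and corrects. The sketch below assumes the simplest case, linear interpolation of axis-aligned boxes in pixel coordinates; production tools may instead propagate boxes with a tracker. The `Box` type and `interpolate_boxes` function are hypothetical names for illustration.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass(frozen=True)
class Box:
    x: float  # top-left x in pixels
    y: float  # top-left y in pixels
    w: float  # width in pixels
    h: float  # height in pixels


def interpolate_boxes(start_frame: int, start: Box, end_frame: int, end: Box) -> Dict[int, Box]:
    """Linearly interpolate a box for every frame strictly between two keyframes."""
    if end_frame <= start_frame:
        raise ValueError("end_frame must come after start_frame")
    span = end_frame - start_frame
    result: Dict[int, Box] = {}
    for f in range(start_frame + 1, end_frame):
        t = (f - start_frame) / span  # fraction of the way to the end keyframe
        result[f] = Box(
            x=start.x + t * (end.x - start.x),
            y=start.y + t * (end.y - start.y),
            w=start.w + t * (end.w - start.w),
            h=start.h + t * (end.h - start.h),
        )
    return result


# Keyframes at frame 0 and frame 4; frames 1-3 are filled in automatically.
filled = interpolate_boxes(0, Box(100, 50, 40, 80), 4, Box(140, 50, 40, 80))
print(filled[2])  # Box(x=120.0, y=50.0, w=40.0, h=80.0)
```

Linear interpolation works well for steady motion; when an object accelerates or turns between keyframes, the annotator adds another keyframe rather than trusting the filled-in boxes.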

2026 Benchmark Comparison: Consistency vs. Throughput

For video pipelines, performance is measured by temporal stability and feedback speed, not just labeling volume.

| Metric | El Salvador (Nearshore) | India/Vietnam (Offshore) | US Domestic |
| --- | --- | --- | --- |
| Fully Loaded Monthly Cost (per annotator) | $2,400 – $3,200 | $1,900 – $2,700 | $7,500 – $11,500 |
| Temporal Consistency | 95%+ | 80% – 85% | 97%+ |
| Time Zone Alignment | CST (Real-Time) | ~11–13 Hour Offset | Native |
| Feedback Cycle Speed | Same-Day | Multi-Day | Same-Day |
| Connectivity | 10Gbps Redundant | Variable | Tier 1 |
| Security Standards | SOC 2 / HIPAA / ISO-aligned | Moderate | Tier 1 |

Faster feedback loops and higher temporal consistency reduce retraining cycles and improve model stability.

The Modern Video Annotation Workflow

Video annotation in 2026 relies on a layered workflow combining automation with human review.

| Stage | System Role | Human Role (El Salvador Team) |
| --- | --- | --- |
| Frame Detection | Initial object detection | Validation and correction |
| Tracking | Automated trajectory prediction | ID consistency and adjustment |
| Occlusion Handling | Flagging uncertain frames | Resolving hidden object continuity |
| Temporal QA | Pattern checks across frames | Final validation |
| Dataset Refinement | Model updates | Guideline tuning |

This approach ensures:

- Stable object tracking

- Reduced flicker

- Consistent dataset structure
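The "pattern checks across frames" in the Temporal QA stage can be as simple as comparing each track with itself over time. The minimal sketch below assumes a track is stored as a mapping from frame index to an (x, y, width, height) box; it flags consecutive frames whose boxes overlap too little (a likely jump or flicker) and missing frames (a possible occlusion to resolve). The 0.5 IoU threshold and the `flag_track` name are illustrative assumptions, not a standard.

```python
from typing import Dict, List, Tuple

BoxXYWH = Tuple[float, float, float, float]  # (x, y, width, height) in pixels


def iou(a: BoxXYWH, b: BoxXYWH) -> float:
    """Intersection-over-union of two axis-aligned boxes."""
    ax2, ay2 = a[0] + a[2], a[1] + a[3]
    bx2, by2 = b[0] + b[2], b[1] + b[3]
    iw = max(0.0, min(ax2, bx2) - max(a[0], b[0]))
    ih = max(0.0, min(ay2, by2) - max(a[1], b[1]))
    inter = iw * ih
    union = a[2] * a[3] + b[2] * b[3] - inter
    return inter / union if union > 0 else 0.0


def flag_track(track: Dict[int, BoxXYWH], min_iou: float = 0.5) -> List[Tuple[int, str]]:
    """Return (frame, reason) pairs a reviewer should inspect for one track ID."""
    flags: List[Tuple[int, str]] = []
    frames = sorted(track)
    for prev, curr in zip(frames, frames[1:]):
        if curr - prev > 1:
            # Across a gap, IoU is not compared; the gap itself is the flag.
            flags.append((curr, f"gap of {curr - prev - 1} frame(s)"))
        elif iou(track[prev], track[curr]) < min_iou:
            flags.append((curr, "box jump / flicker"))
    return flags


track_7 = {
    0: (100, 50, 40, 80),
    1: (102, 50, 40, 80),
    2: (160, 50, 40, 80),  # sudden jump between consecutive frames
    5: (166, 52, 40, 80),  # frames 3-4 missing (possible occlusion)
}
print(flag_track(track_7))  # [(2, 'box jump / flicker'), (5, 'gap of 2 frame(s)')]
```

The system only raises flags; in the workflow above, a human still decides whether a flagged frame is a genuine error or real motion.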

Infrastructure: Built for High-Volume Video Pipelines

Video annotation requires environments capable of handling large files, continuous streams, and intensive processing workloads.

Technical Environment (2026)

| Component | Capability | Impact |
| --- | --- | --- |
| Connectivity | Dedicated high-speed fiber + 5G backup | Smooth streaming of large video datasets |
| Processing | GPU-enabled workstations | Efficient handling of high-resolution frames |
| Security | Zero-trust access environments | Protection of sensitive video data |
| Power | Redundant systems with backup | Continuous operations |
| Work Model | Secure on-site delivery | Compliance with restricted data requirements |

These systems support real-time annotation workflows while minimizing delays and data exposure risk.

Vertical Specialization: Key Video Annotation Domains

El Salvador’s annotation teams are organized into specialized pods, improving performance in specific use cases:

Autonomous Mobility

- Multi-camera synchronization

- Object tracking across dynamic environments

Security & Smart Infrastructure

- Behavior analysis

- Anomaly detection in public spaces

Sports & Media

- Motion tracking

- Player and object recognition

Medical Video Analysis

- Procedure annotation

- Instrument tracking in surgical footage

Case Study: Improving Tracking Accuracy in Autonomous Delivery Systems

The Challenge:

A robotics company faced inconsistencies in object tracking, particularly when pedestrians moved behind obstacles, causing errors in model predictions.

The Approach:

A nearshore video annotation team in El Salvador implemented:

- Structured tracking protocols

- Daily quality reviews

- Real-time communication with engineering teams

The Outcome:

- ID-switch errors dropped significantly, improving dataset stability

- Iteration cycles accelerated, reducing time between labeling and training

- Model accuracy improved, enabling faster deployment

Key Insight:

Maintaining object continuity across frames was more impactful than increasing annotation speed.
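The case study uses ID-switch errors as its main stability signal. One common way to count them, in the spirit of the CLEAR MOT mismatch count, is to record which annotated track ID each ground-truth object is matched to in every frame and count a switch whenever that ID changes from the last one seen. The minimal sketch below assumes the per-frame matching has already been done upstream; `count_id_switches` and the dict-per-frame layout are hypothetical.

```python
from typing import Dict, List, Optional

# Each frame maps a ground-truth object ID to the track ID it was matched to,
# or None when the object was not matched (e.g. fully occluded).
Frame = Dict[str, Optional[int]]


def count_id_switches(frames: List[Frame]) -> int:
    """Count frames where an object's matched track ID differs from its last one."""
    last_match: Dict[str, int] = {}
    switches = 0
    for frame in frames:
        for gt_id, track_id in frame.items():
            if track_id is None:
                continue  # unmatched frames are skipped; the last known ID is kept
            previous = last_match.get(gt_id)
            if previous is not None and previous != track_id:
                switches += 1
            last_match[gt_id] = track_id
    return switches


frames = [
    {"p1": 7, "p2": 3},
    {"p1": 7, "p2": 3},
    {"p1": None, "p2": 3},  # p1 walks behind an obstacle
    {"p1": 12, "p2": 3},    # p1 reappears under a new ID
]
print(count_id_switches(frames))  # 1
```

Because the last known ID survives the occluded frame, the pedestrian who reappears as ID 12 is counted as one switch, which is exactly the failure mode the delivery-robot team was fixing.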

Strategic Implementation: Building a Stable Video Annotation Pipeline

Prioritize Temporal Accuracy

Focus on:

- Consistent object IDs

- Smooth transitions across frames

- Reliable handling of occlusion

Enable Rapid Feedback Cycles

Nearshore collaboration allows:

- Immediate guideline updates

- Faster correction of errors

- Continuous quality alignment

Segment Workflows by Complexity

Separate:

- Automated tracking tasks

- Human-reviewed edge cases

This improves both efficiency and output quality.
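A minimal sketch of that split, assuming each tracked segment carries an average model confidence and a count of frames the system flagged as occluded: segments that are both confident and unoccluded go to an automated queue, and everything else is treated as an edge case for human review. The `TrackSegment` fields, the 0.85 threshold, and `route_segments` are hypothetical choices for illustration.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class TrackSegment:
    track_id: int
    mean_confidence: float  # average detector/tracker confidence, 0.0-1.0
    occluded_frames: int    # frames flagged as occluded by the system


def route_segments(
    segments: List[TrackSegment],
    min_confidence: float = 0.85,
    max_occluded: int = 0,
) -> Tuple[List[TrackSegment], List[TrackSegment]]:
    """Split segments into (automated, human_review) queues.

    A segment stays automated only if it is high-confidence AND has no more
    occluded frames than allowed; everything else goes to a human reviewer.
    """
    automated: List[TrackSegment] = []
    human_review: List[TrackSegment] = []
    for seg in segments:
        if seg.mean_confidence >= min_confidence and seg.occluded_frames <= max_occluded:
            automated.append(seg)
        else:
            human_review.append(seg)
    return automated, human_review


auto, review = route_segments([
    TrackSegment(1, 0.93, 0),
    TrackSegment(2, 0.71, 0),  # low confidence
    TrackSegment(3, 0.95, 4),  # passes behind an obstacle
])
print([s.track_id for s in auto], [s.track_id for s in review])  # [1] [2, 3]
```

Occlusion is routed to people even when confidence is high, since that is where ID switches tend to originate; automated segments would still typically be spot-checked in QA.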

Frequently Asked Questions (FAQs)

Can teams handle high-resolution video datasets?

Yes. Many providers support 4K, multi-camera, and 360° video workflows using high-performance systems.

How is temporal consistency maintained?

Through structured tracking protocols, QA layers, and continuous validation across frames.

Is it possible to work in real time with annotation teams?

Yes. Nearshore alignment allows for live communication and rapid iteration during active development cycles.

How is sensitive video data protected?

Secure environments and controlled access systems ensure that data remains protected throughout the process.

What makes El Salvador suitable for video annotation?

Its combination of real-time collaboration, consistent output quality, and strong communication improves both efficiency and model performance.


Ralf Ellspermann is the Chief Strategy Officer (CSO) of Cynergy BPO and a globally recognized authority in business process and contact center outsourcing. With more than 25 years of experience advising enterprises and SMEs, he provides strategic guidance on vendor selection, CX optimization, and scalable outsourcing strategies across global markets. His expertise spans fintech, ecommerce and retail, healthcare, insurance, travel and hospitality, and technology (AI & SaaS) outsourcing.

A frequent speaker at leading industry conferences, Ralf is also a published contributor to The Times of India and CustomerThink, where he shares insights on outsourcing strategy, customer experience, and digital transformation.